Public Health Engagement & Data Portal - System Design

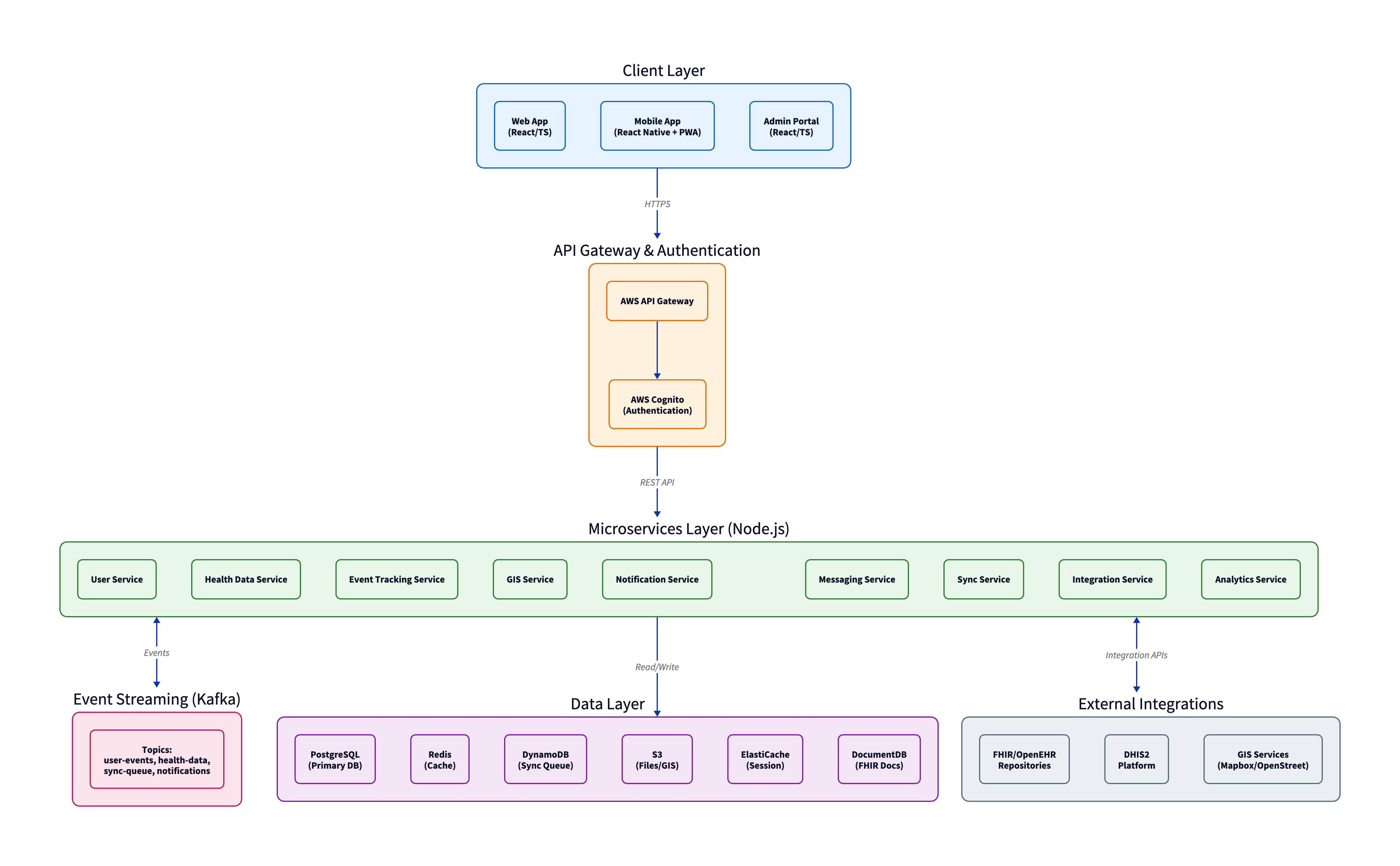

This document presents a system design for a Public Health Engagement & Data Portal built to address unreliable connectivity in African public health programs. The system lets community health workers record patient encounters, log intervention activities, and coordinate with their teams regardless of network availability, and it synchronizes their changes when connectivity returns.

Executive Summary

The platform treats offline operation as the default, not an edge case. Field workers can work fully offline; when connectivity returns, the system synchronizes their changes and resolves conflicts. The system integrates with national health information systems such as DHIS2 and supports multiple organizations (ministries of health, NGOs, research institutions) on shared infrastructure while maintaining strict data isolation.

The design addresses real-world constraints: inconsistent power, shared devices, varying technical literacy, and the need to integrate with legacy EMR systems. The architecture follows the FHIR R4 standard for health data interoperability, so it can exchange data with other FHIR-capable health information systems.

Technology Stack

| Layer | Technology | Rationale |
|---|---|---|
| Frontend | React + React Native | Shared React expertise across web and mobile, strong PWA/offline support |
| Backend | Node.js | Team familiarity, good async I/O for sync operations |
| Primary Database | PostgreSQL | Mature, PostGIS for mapping, row-level security for tenants |
| Sync Queue | DynamoDB | Handles burst writes during mass sync events |
| Event Streaming | Kafka | Reliable delivery for health data, with ordering preserved within each partition |
| Cloud Platform | AWS | Regional presence in and near Africa (af-south-1 in Cape Town, eu-west-1 in Ireland) |

Key Architectural Decisions

Dedicated Sync Service

Synchronization is the most complex part of the system. Conflict resolution, queue management, and retry logic are isolated in a dedicated service that can scale independently. When 500 field workers come back online after a regional network outage, the Sync Service handles the burst load without impacting other services.
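
One common way to keep a burst of reconnecting clients from retrying in lockstep is exponential backoff with jitter. The sketch below is illustrative only: the function name `retryDelayMs` and the default delays are assumptions, not values from the design.

```typescript
// Hypothetical retry-delay policy for sync retries; all constants are assumptions.
interface BackoffOptions {
  baseMs: number; // ceiling for the first retry
  maxMs: number;  // upper bound on any single delay
}

// "Full jitter" backoff: pick a random delay in [0, min(maxMs, baseMs * 2^attempt)).
// The random source is injectable so the behaviour can be tested deterministically.
function retryDelayMs(
  attempt: number,
  opts: BackoffOptions = { baseMs: 1000, maxMs: 60000 },
  random: () => number = Math.random,
): number {
  const ceiling = Math.min(opts.maxMs, opts.baseMs * 2 ** attempt);
  return Math.floor(random() * ceiling);
}
```

Because each client draws its own random delay, 500 devices that fail at the same moment spread their retries across the window instead of arriving together.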

Kafka for Event Streaming

Health data updates require guaranteed delivery with ordering. If a patient record is created and then updated, those events must be processed in sequence. Kafka preserves order within a partition, so keying events by record (for example, by patient ID) provides this guarantee for each record. Although Kafka is operationally complex, the data integrity requirements justify the choice over simpler alternatives like SQS or Redis pub/sub.
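
The ordering argument depends on all events for one record landing in the same partition. The sketch below shows that idea with a stand-in hash; it is not Kafka's actual partitioner, and the `RecordEvent` shape and function names are assumptions made for illustration.

```typescript
// Illustrative only: real Kafka clients ship their own partitioners.
// FNV-1a is used here just to show the key-to-partition mapping.
function partitionFor(key: string, numPartitions: number): number {
  let hash = 0x811c9dc5;
  for (let i = 0; i < key.length; i++) {
    hash ^= key.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0; // keep as unsigned 32-bit
  }
  return hash % numPartitions;
}

// Hypothetical event shape.
interface RecordEvent {
  patientId: string;
  type: "created" | "updated";
}

// Append each event to the partition chosen by its patient ID.
// Events for one patient keep their relative order inside that partition.
function routeEvents(events: RecordEvent[], numPartitions: number): Map<number, RecordEvent[]> {
  const partitions = new Map<number, RecordEvent[]>();
  for (const event of events) {
    const p = partitionFor(event.patientId, numPartitions);
    const list = partitions.get(p) ?? [];
    list.push(event);
    partitions.set(p, list);
  }
  return partitions;
}
```

Events for different patients may interleave or land on different partitions, which is acceptable because only per-record order matters here.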

DocumentDB for FHIR Resources

FHIR resources are deeply nested JSON documents that don't map cleanly to relational tables. The system stores canonical FHIR JSON in DocumentDB while maintaining searchable indexes in PostgreSQL. This dual-storage approach avoids constant transformation between FHIR JSON and relational schemas.
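
As a rough illustration of the dual-storage idea, the sketch below derives a flat, searchable row from a FHIR R4 Patient resource while the full JSON would be stored unchanged elsewhere. The `PatientIndexRow` columns and the function name are assumptions; only the Patient fields (`name`, `birthDate`, `gender`, `identifier`) come from FHIR R4.

```typescript
// Minimal subset of a FHIR R4 Patient, enough for this example.
interface FhirPatient {
  resourceType: "Patient";
  id: string;
  name?: { family?: string; given?: string[] }[];
  birthDate?: string;
  gender?: string;
  identifier?: { system?: string; value?: string }[];
}

// Hypothetical shape of the PostgreSQL index row; column names are assumptions.
interface PatientIndexRow {
  fhir_id: string;
  family_name: string | null;
  given_names: string | null;
  birth_date: string | null;
  gender: string | null;
  identifiers: string[]; // "system|value" tokens
}

// Project the nested document onto flat, indexable columns.
function toIndexRow(patient: FhirPatient): PatientIndexRow {
  const primaryName = patient.name?.[0];
  return {
    fhir_id: patient.id,
    family_name: primaryName?.family ?? null,
    given_names: primaryName?.given?.join(" ") ?? null,
    birth_date: patient.birthDate ?? null,
    gender: patient.gender ?? null,
    identifiers: (patient.identifier ?? [])
      .filter((i) => i.system !== undefined && i.value !== undefined)
      .map((i) => `${i.system}|${i.value}`),
  };
}
```

The canonical document is never rebuilt from the row; the row exists only to answer searches and can be regenerated from the stored JSON at any time.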

Offline-First Design

The system is designed on the assumption that the client will be offline most of the time. This is the defining technical challenge, and it shapes three principles:

- Every feature works without connectivity - If a feature requires real-time server communication, it's either redesigned or explicitly marked as online-only

- Local storage is the source of truth - When offline, the app reads from and writes to IndexedDB. Users don't see spinners or error states

- Background incremental sync - When connectivity appears, the app syncs in the background without interrupting workflow, only syncing what changed
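
A minimal sketch of the "only syncing what changed" principle is a local outbox of pending edits. The version below keeps the outbox in memory for brevity; a real client would persist it (for example in IndexedDB). The class and method names are assumptions.

```typescript
// Hypothetical pending-edit record.
interface PendingChange {
  recordId: string;
  field: string;
  value: unknown;
  changedAt: number; // local timestamp in ms
}

// In-memory stand-in for a persisted outbox.
class Outbox {
  private pending = new Map<string, PendingChange>();

  // Record a local edit; a later edit to the same field replaces the earlier one.
  record(change: PendingChange): void {
    this.pending.set(`${change.recordId}:${change.field}`, change);
  }

  // Everything that changed since the last acknowledged sync, oldest first.
  nextBatch(): PendingChange[] {
    return Array.from(this.pending.values()).sort((a, b) => a.changedAt - b.changedAt);
  }

  // Remove entries the server accepted, unless the user edited the same
  // field again while the batch was in flight.
  acknowledge(batch: PendingChange[]): void {
    for (const sent of batch) {
      const key = `${sent.recordId}:${sent.field}`;
      if (this.pending.get(key) === sent) this.pending.delete(key);
    }
  }

  count(): number {
    return this.pending.size;
  }
}
```

Entries are removed only after acknowledgement, so a sync that fails midway leaves the outbox intact and the next attempt simply resends.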

Conflict Resolution Strategy

Conflicts are inevitable when multiple users edit the same record while offline. The system uses a tiered resolution approach:

Automatic resolution (most cases):

- Different fields edited → merge both changes

- Same field, same value → no conflict

- Metadata changes → server always wins

- User role hierarchy → clinician > nurse > field officer > data clerk

User resolution (when automatic resolution fails):

- Same field, different values, same role level

- Deletions conflicting with edits

- Any conflict involving diagnosis or treatment data
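
The tiers above can be sketched as a single merge function. This is an illustration under several assumptions: field values are scalars, the sets `CLINICAL_FIELDS` and `METADATA_FIELDS` are placeholders, the role names are normalized versions of the hierarchy listed above, and deletion-versus-edit conflicts are left out.

```typescript
type Role = "clinician" | "nurse" | "field_officer" | "data_clerk";
const ROLE_RANK: Record<Role, number> = { clinician: 3, nurse: 2, field_officer: 1, data_clerk: 0 };

// Placeholder field sets; the real lists would come from the data model.
const CLINICAL_FIELDS = new Set(["diagnosis", "treatment"]);
const METADATA_FIELDS = new Set(["updatedAt"]);

type Scalar = string | number | boolean | null;
interface FieldEdit { value: Scalar; role: Role }
type EditSet = Record<string, FieldEdit>;

interface MergeResult {
  merged: Record<string, Scalar>;
  needsReview: string[]; // fields escalated to a user
}

function mergeEdits(local: EditSet, server: EditSet): MergeResult {
  const merged: Record<string, Scalar> = {};
  const needsReview: string[] = [];
  const fields = Array.from(new Set(Object.keys(local).concat(Object.keys(server))));
  for (const field of fields) {
    const l: FieldEdit | undefined = local[field];
    const s: FieldEdit | undefined = server[field];
    if (l === undefined || s === undefined) {
      // Different fields edited: keep whichever side touched this one.
      const only = l ?? s;
      if (only !== undefined) merged[field] = only.value;
    } else if (l.value === s.value) {
      merged[field] = s.value; // same value, no conflict
    } else if (CLINICAL_FIELDS.has(field)) {
      needsReview.push(field); // diagnosis/treatment always go to a user
    } else if (METADATA_FIELDS.has(field)) {
      merged[field] = s.value; // server always wins on metadata
    } else if (ROLE_RANK[l.role] !== ROLE_RANK[s.role]) {
      merged[field] = ROLE_RANK[l.role] > ROLE_RANK[s.role] ? l.value : s.value;
    } else {
      needsReview.push(field); // same role level, different values
    }
  }
  return { merged, needsReview };
}
```

The clinical-field check runs before the role check on purpose, so a clinician's edit to a diagnosis is still reviewed rather than silently overriding a nurse's.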

Multi-Tenancy Architecture

The system supports multiple organizations on shared infrastructure with strict data isolation. A ministry of health must never see an NGO's data, even if both operate in the same country.

Approach: Separate Schemas

- Each tenant gets their own PostgreSQL schema with identical table structures

- Strong isolation - a query that omits a tenant filter can't accidentally leak data across schemas

- Operational costs remain reasonable compared to separate databases per tenant

- For large tenants or strict data residency requirements, dedicated databases can be provisioned

The authentication flow extracts tenant_id from the JWT, and all database connections are scoped to that tenant's schema. Row-level security policies act as a defense-in-depth safety net.
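
One possible way to scope a connection is to derive the schema name from the verified token and set it per transaction. The sketch below assumes the JWT has already been verified, that schemas are named `tenant_<id>`, and that row-level security policies read a custom setting called `app.tenant_id`; all three are assumptions, as are the function names.

```typescript
// Hypothetical claims shape; only tenant_id is named in the design.
interface Claims {
  sub: string;
  tenant_id: string;
}

// Strict allowlist so the tenant ID is safe to use as part of an SQL identifier.
const TENANT_ID_PATTERN = /^[a-z][a-z0-9_]{0,40}$/;

function schemaForTenant(claims: Claims): string {
  if (!TENANT_ID_PATTERN.test(claims.tenant_id)) {
    throw new Error("Invalid tenant_id in token");
  }
  return `tenant_${claims.tenant_id}`;
}

// Statements to run at the start of each transaction.
// SET LOCAL only lasts until the transaction ends, so a pooled
// connection cannot carry one tenant's scope into another request.
function scopeStatements(claims: Claims): string[] {
  const schema = schemaForTenant(claims);
  return [
    `SET LOCAL search_path TO "${schema}"`,
    `SET LOCAL app.tenant_id = '${claims.tenant_id}'`,
  ];
}
```

Rejecting anything outside the allowlist pattern is what makes the string interpolation acceptable here; a tenant ID from any less trusted source would need proper identifier quoting instead.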

External Integrations

DHIS2 Integration

Most African ministries of health use DHIS2 for health information management. The integration:

- Pushes aggregate data (e.g., "47 children vaccinated in District X this week")

- Pulls reference data (facility lists, organization units, data element definitions)

- Handles the reality that every DHIS2 instance is configured differently

An abstraction layer holds configurable field mappings per tenant. Mappings are set up manually during onboarding because DHIS2's flexibility makes full automation impractical.
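
A per-tenant mapping might be applied as in the sketch below, which turns an internal aggregate report into a payload shaped like a DHIS2 data value set (`dataSet`, `period`, `orgUnit`, `dataValues`). The mapping structure, the internal report shape, and every identifier in the usage example are made up; the payload should be checked against the target DHIS2 instance.

```typescript
// Hypothetical per-tenant mapping configured during onboarding.
interface Dhis2Mapping {
  dataSet: string;
  dataElements: Record<string, string>; // internal indicator -> DHIS2 data element ID
  orgUnits: Record<string, string>;     // internal district code -> DHIS2 org unit ID
}

// Hypothetical internal aggregate, e.g. weekly counts for one district.
interface AggregateReport {
  district: string;
  period: string; // e.g. "2024W12" for a weekly period
  counts: Record<string, number>;
}

interface DataValueSet {
  dataSet: string;
  period: string;
  orgUnit: string;
  dataValues: { dataElement: string; value: string }[];
}

function toDataValueSet(report: AggregateReport, mapping: Dhis2Mapping): DataValueSet {
  const orgUnit: string | undefined = mapping.orgUnits[report.district];
  if (orgUnit === undefined) {
    throw new Error(`No orgUnit mapping for district ${report.district}`);
  }
  const dataValues: { dataElement: string; value: string }[] = [];
  for (const indicator of Object.keys(report.counts)) {
    const dataElement: string | undefined = mapping.dataElements[indicator];
    const count: number | undefined = report.counts[indicator];
    if (dataElement === undefined || count === undefined) {
      // Fail loudly rather than silently dropping an unmapped indicator.
      throw new Error(`No dataElement mapping for indicator ${indicator}`);
    }
    dataValues.push({ dataElement, value: String(count) });
  }
  return { dataSet: mapping.dataSet, period: report.period, orgUnit, dataValues };
}
```

For example, a report of 47 vaccinated children in one district becomes a single data value with `value: "47"` under that tenant's configured data element.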

EMR Systems

The system supports multiple EMR integration patterns:

- FHIR R4 APIs for modern EMRs that expose them

- HL7 v2 messages for legacy systems (via translation layer)

- Flat file imports as a last resort

For EMR data, the system acts as a consumer, not a producer: the EMR remains the source of truth for clinical data.
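
To give a sense of what the HL7 v2 translation layer does, the sketch below maps a single PID segment to a minimal FHIR-style Patient. It is deliberately simplified: it assumes the default `|` and `^` delimiters and ignores escape sequences, field repetitions, and every segment other than PID. The type and function names are assumptions.

```typescript
// Minimal FHIR-style Patient produced by this example.
interface MinimalPatient {
  resourceType: "Patient";
  identifier: { value: string }[];
  name: { family: string; given: string[] }[];
  gender: "male" | "female" | "other" | "unknown";
  birthDate?: string;
}

// HL7 v2 administrative sex codes mapped to FHIR gender codes.
const GENDER: Record<string, MinimalPatient["gender"]> = {
  M: "male",
  F: "female",
  O: "other",
  U: "unknown",
};

function pidToPatient(pidSegment: string): MinimalPatient {
  const fields = pidSegment.trim().split("|");
  if (fields[0] !== "PID") throw new Error("Expected a PID segment");

  const [idValue = ""] = (fields[3] ?? "").split("^");            // PID-3: identifier
  const [family = "", given = ""] = (fields[5] ?? "").split("^"); // PID-5: name
  const dob = fields[7] ?? "";                                    // PID-7: YYYYMMDD...
  const sex = fields[8] ?? "";                                    // PID-8: sex code

  const patient: MinimalPatient = {
    resourceType: "Patient",
    identifier: idValue ? [{ value: idValue }] : [],
    name: family || given ? [{ family, given: given ? [given] : [] }] : [],
    gender: GENDER[sex] ?? "unknown",
  };
  if (/^\d{8}/.test(dob)) {
    patient.birthDate = `${dob.slice(0, 4)}-${dob.slice(4, 6)}-${dob.slice(6, 8)}`;
  }
  return patient;
}
```

Data flows one way in this sketch, from the EMR message into the portal's representation, which matches the consumer-only role described above.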

Security & Compliance

Health data security is designed as a core concern, not an afterthought:

- Encryption everywhere: AWS KMS-managed keys for databases and S3, TLS 1.3 for all connections, field-level encryption for patient identifiers

- Comprehensive audit logging: Every data access logged (who, what, when, where) with 7-year retention in immutable CloudWatch Logs

- Multi-factor authentication: Required for admin and clinician roles

- Compliance support: HIPAA (US-funded programs), GDPR (EU-funded programs), and local data protection laws with per-tenant configuration

Performance & Scalability

The system is designed to scale from pilot deployments (50 users) to national deployments (100,000+ users):

- Auto-scaling: AWS ECS with automatic capacity increases during load spikes (e.g., Monday mornings when field workers sync weekend data)

- Database scaling: PostgreSQL read replicas, DynamoDB on-demand capacity, Redis cluster mode

- Performance targets: API response time (p95) <500ms, sync initiation <2 seconds, dashboard load <3 seconds
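
For clarity on what a p95 target means in practice, the sketch below computes a nearest-rank percentile over a set of latency samples and checks it against the 500 ms API target. The function names are assumptions, and a production system would normally read this figure from its monitoring stack rather than compute it by hand.

```typescript
// Nearest-rank percentile: the smallest sample such that at least
// p percent of all samples are less than or equal to it.
function percentile(samples: number[], p: number): number {
  if (samples.length === 0) throw new Error("No samples");
  const sorted = samples.slice().sort((a, b) => a - b);
  const rank = Math.max(1, Math.ceil((p * sorted.length) / 100));
  return sorted[rank - 1] as number;
}

// Target taken from the list above: API response time (p95) under 500 ms.
const API_P95_TARGET_MS = 500;

function meetsApiTarget(latenciesMs: number[]): boolean {
  return percentile(latenciesMs, 95) < API_P95_TARGET_MS;
}
```

With 20 samples, one slow request still meets the target because the 95th percentile is the 19th-ranked sample; two slow requests do not.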

Implementation Approach

The system will be built in five phases, each delivering usable functionality:

- Phase 1: Foundation - Core infrastructure, authentication, basic health data storage

- Phase 2: Field Operations - Event tracking, GIS, notifications, web frontend

- Phase 3: Offline & Mobile - Sync service, mobile app, offline support (the hardest phase)

- Phase 4: Integrations - DHIS2, EMR connections

- Phase 5: Polish & Scale - Analytics, performance optimization, security hardening

Total effort is estimated at 50-65 person-months, with a team of 4-5 engineers for core development, plus domain expertise and compliance support.

Complete System Design Document

The full system design document (18 sections, covering all aspects from architecture to operations) is embedded below. You can view it directly in your browser or download it for offline reading.
